Extraction, Pressure, and the Limits of Scalable Insight

There is a class of systems in which intelligence becomes self-defeating once it scales.

Not because the intelligence is wrong. Not because the models fail. But because extraction is inseparable from perturbation.

In these systems, insight exists only while it is applied gently. Push too hard, and the structure that made the insight possible erodes. This is not a moral problem. It is a structural one.

Markets belong to this class — though not every strategy reaches the boundary at the same speed, and not every domain with gradients rewards intelligence equally quickly.

1. The Hidden Assumption

Throughout this essay, “intelligence” means the same thing in every domain: the ability to identify, exploit, and systematically amplify a gradient in a complex system.

That gradient may be informational (markets), physical (oil reservoirs, power grids), institutional (tax codes, regulation), or logistical (networks, supply chains). The form differs; the force does not.

Much modern thinking quietly assumes a separation between knowing and acting. We behave as if intelligence can observe a system, extract information, and scale that extraction without altering the system itself.

That assumption holds in static or weakly coupled environments. It fails in feedback-coupled ones.

In such systems, observation requires interaction; interaction alters structure; and scaling induces regime change, not linear improvement. The system tolerates probing, but not sustained pressure.

Automation does not change this structure, but it compresses the timescale: a gradient that once took years of primary extraction to deplete may now be exhausted in moments, making unrestrained intelligence catastrophic rather than merely erosive.

The limit is not cognitive. It is structural.

2. Two Kinds of Landscapes

To understand the limit, we need a simple taxonomy — not about epistemology, but about what happens when intelligence scales.

Type I: Weakly coupled landscapes

- Analysis minimally alters the environment

- Computation scales with limited back-reaction

- Structure largely survives scrutiny

Examples:

- Mathematics

- Formal optimisation problems

Type II: Feedback-coupled landscapes

- Observation changes dynamics

- Exploitation alters the payoff surface

- Scaling erodes the very structure being exploited

Examples:

- Financial markets

- Ecosystems under harvesting

- Adversarial regulatory systems

The distinction is not philosophical. It is about capacity limits under scaling.

3. Why “Alpha” Is the Wrong Metaphor

Finance treats alpha as if it were a resource: something you find, bottle, and scale.

This is a category error.

Alpha is not a substance. It is a gradient.

It exists only while the system is lightly perturbed. As extraction increases, the gradient flattens — not because intelligence weakens, but because the environment adapts.

Different strategies encounter this limit at different capital thresholds.

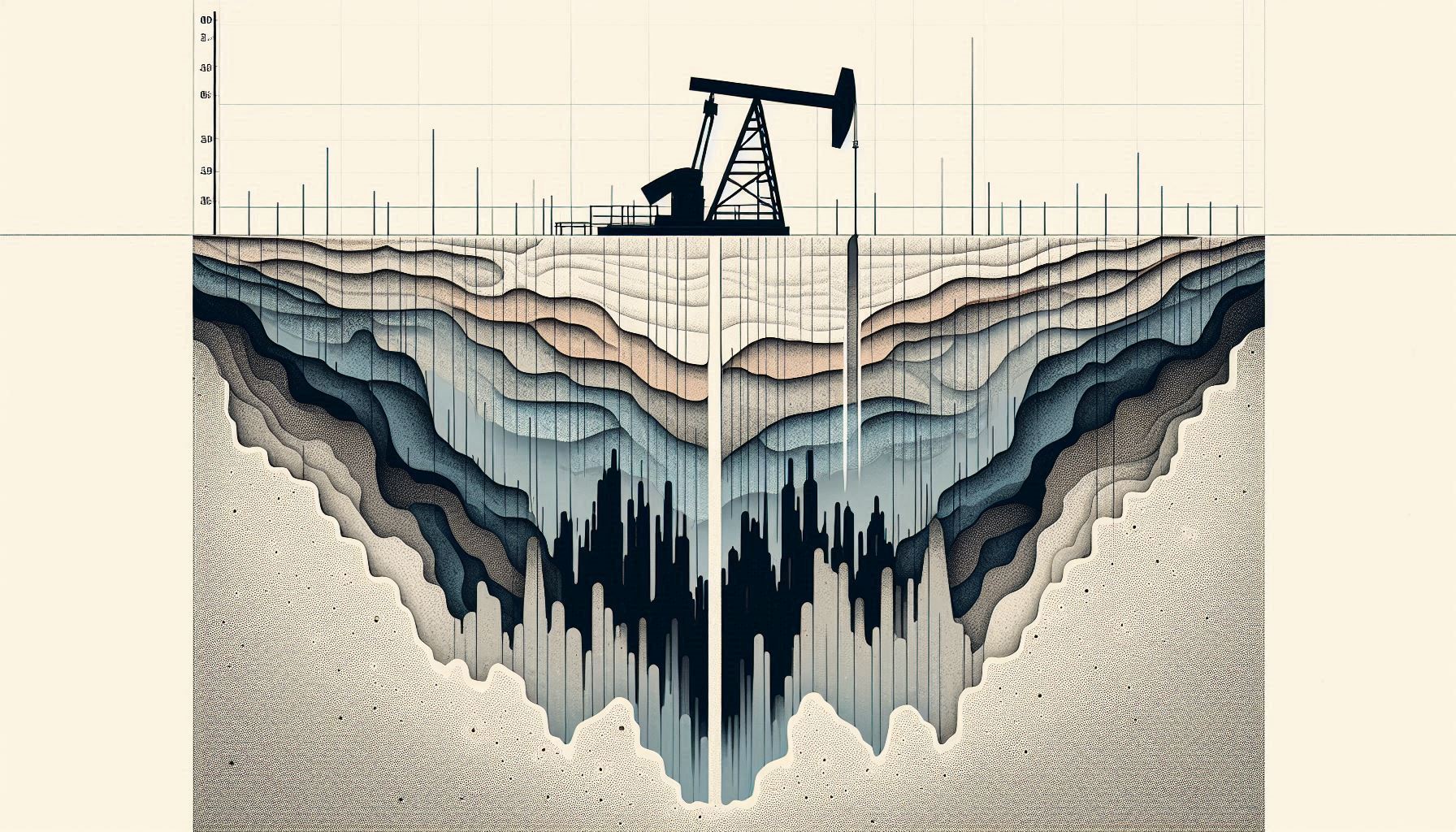
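The flattening can be made concrete with a toy capacity model. This is a minimal sketch, not a calibrated one: it assumes the per-unit edge falls with the square root of capital deployed relative to available liquidity (a common stylised form for price impact, swapped in here for illustration), and the names `gross_edge`, `impact_coeff`, and `liquidity` are hypothetical parameters that do not come from the essay.

```python
import math


def net_pnl(capital, gross_edge, impact_coeff, liquidity):
    """Net profit when the per-unit edge shrinks as capital grows.

    Assumption (illustrative only): impact grows with sqrt(capital / liquidity).
    """
    edge_after_impact = gross_edge - impact_coeff * math.sqrt(capital / liquidity)
    return capital * edge_after_impact


def optimal_capital(gross_edge, impact_coeff, liquidity):
    """Capital that maximises net_pnl, from setting d(pnl)/d(capital) = 0."""
    return liquidity * (2.0 * gross_edge / (3.0 * impact_coeff)) ** 2


def breakeven_capital(gross_edge, impact_coeff, liquidity):
    """Capital at which the edge is fully flattened and net profit is zero."""
    return liquidity * (gross_edge / impact_coeff) ** 2


if __name__ == "__main__":
    # Two hypothetical strategies: a steep edge in a thin market,
    # and a shallow edge in a deep market.
    strategies = {
        "steep_thin": dict(gross_edge=0.05, impact_coeff=0.5, liquidity=1e8),
        "shallow_deep": dict(gross_edge=0.01, impact_coeff=0.05, liquidity=1e10),
    }
    for name, params in strategies.items():
        best = optimal_capital(**params)
        limit = breakeven_capital(**params)
        print(name, round(best), round(limit), round(net_pnl(best, **params)))
```

Under these assumptions the profit-maximising size sits well below the break-even size, and both differ by orders of magnitude between the two strategies. Past the optimum, every additional unit of capital lowers total profit even though the model itself has not changed.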

4. The Petroleum Engineering Analogy

Petroleum extraction provides the cleanest physical analogue for what happens to alpha under scale, because it separates discovery, extraction, and environmental redesign with engineering precision.

Primary Recovery: Natural Pressure

An oil reservoir begins pressurised by geology. Oil flows naturally toward wells with minimal intervention. Extraction is cheap, local, and highly profitable.

This corresponds to high-Sharpe, low-capacity strategies: small capital, steep gradients, minimal impact on the environment. Intelligence merely finds what already exists.

Depletion: Extraction Degrades the Gradient

As oil is removed, reservoir pressure drops. Flow slows. Each additional barrel is harder to extract, not because the oil has disappeared, but because extraction itself has degraded the enabling structure.

In markets, this happens faster and more aggressively: arbitrage is competitive, gradients are informational rather than physical, and extraction actively destroys the signal through imitation and price response.

Secondary Recovery: Pressure Maintenance

To continue extraction, engineers inject water or gas to maintain pressure.

This is not discovering new oil. It is intervening in the system to preserve extractability.

Secondary recovery increases total yield — but only by redesigning the environment. It is capital-intensive, fragile, and fundamentally different from primary extraction.
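The difference between depletion and pressure maintenance can be sketched with a single-tank model. This is a deliberately crude illustration, not reservoir engineering: it assumes flow is proportional to pressure and that pressure changes in proportion to net fluid removed. The parameters `productivity`, `storage`, and `injection_rate` are hypothetical, and the cost of injection is not modelled.

```python
def simulate_reservoir(initial_pressure, productivity, storage,
                       injection_rate=0.0, steps=200, dt=1.0):
    """Euler simulation of a one-tank reservoir.

    Assumptions (illustrative only):
      flow rate        = productivity * pressure
      d(pressure)/dt   = (injection_rate - flow rate) / storage
    Returns the final pressure, cumulative production, and per-step rates.
    """
    pressure = initial_pressure
    cumulative = 0.0
    rates = []
    for _ in range(steps):
        rate = productivity * pressure
        rates.append(rate)
        cumulative += rate * dt
        # Extraction lowers pressure; injection pushes it back up.
        pressure += (injection_rate - rate) * dt / storage
    return pressure, cumulative, rates


if __name__ == "__main__":
    primary = simulate_reservoir(100.0, 0.5, 50.0)
    maintained = simulate_reservoir(100.0, 0.5, 50.0, injection_rate=20.0)
    print("primary:    final pressure, cumulative =", primary[0], primary[1])
    print("maintained: final pressure, cumulative =", maintained[0], maintained[1])
```

With no injection, each step's flow is lower than the last because extraction itself removes the pressure that drives it. With injection, flow settles toward a floor set by the injection rate: yield continues, but only because something is being pushed into the system from outside.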

In markets, the analogue would be engineering volatility, preserving informational asymmetries, or structurally maintaining gradients. This is where regulation tightens.

Enhanced Recovery: Environmental Redesign

At the extreme, reservoirs are chemically or thermally altered to force oil out. The field is no longer natural; it has been redesigned around extraction.

Financial regulation explicitly forbids this stage when it serves private extraction.

The legal and regulatory boundary in finance sits exactly here:

- extraction is permitted,

- pressure maintenance is constrained,

- environmental redesign is prohibited.

That boundary explains why alpha scales only so far.

5. Persistence Requires Restraint

The existence of limits does not mean extraction is fleeting.

Some strategies persist for decades because they exercise restraint:

- they remain below capacity thresholds,

- exploit slowly renewing structure,

- and avoid redesigning the environment that feeds them.

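This is why Jim Simons’ Medallion Fund worked for so long. It stayed small by design: the fund was closed to outside investors and its size was capped, with profits paid out rather than left to compound. Capacity was treated as a constraint, not a challenge.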

Persistence is achieved not by domination, but by self-limitation.
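The logic of self-limitation can be illustrated with the standard logistic harvesting model from resource economics, borrowed here as an analogy for a slowly renewing gradient. This is a sketch under stated assumptions: the stock regrows logistically, extraction is a fixed amount per unit time, and all parameter values are hypothetical. In this model the largest sustainable take is `growth_rate * capacity / 4`.

```python
def simulate_harvest(harvest_rate, growth_rate=0.5, capacity=100.0,
                     initial_stock=50.0, steps=500, dt=0.1):
    """Euler simulation of logistic regrowth under constant extraction.

    Assumption (illustrative only):
      d(stock)/dt = growth_rate * stock * (1 - stock / capacity) - harvest_rate
    Returns the final stock and the total amount extracted.
    """
    stock = initial_stock
    total_taken = 0.0
    for _ in range(steps):
        stock += growth_rate * stock * (1.0 - stock / capacity) * dt
        taken = min(harvest_rate * dt, stock)  # cannot take more than exists
        stock -= taken
        total_taken += taken
        if stock <= 0.0:
            break  # the structure being exploited is gone
    return stock, total_taken


if __name__ == "__main__":
    sustainable_limit = 0.5 * 100.0 / 4.0  # growth_rate * capacity / 4
    print("sustainable limit:", sustainable_limit)
    print("restrained:", simulate_harvest(harvest_rate=10.0))
    print("aggressive:", simulate_harvest(harvest_rate=15.0))
```

Below the limit, the stock settles at a stable level and extraction can continue indefinitely. Above it, the higher take per period is paid for with collapse, and the cumulative amount extracted over the same horizon ends up lower.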

Even when restraint is rational at the system level, it is often psychologically and institutionally unstable, because individual incentives reward immediate extraction over long-term preservation.

This insight generalises.

6. Adversarial Dynamics and Phase Transitions

In feedback-coupled systems, competition does more than erase signal.

It selects for opacity.

Visible edges are copied and flattened. Surviving edges migrate into secrecy, latency, complexity, or institutional friction. What persists is not the best model, but the hardest one to observe.

As coupling strengthens, systems do not degrade smoothly. They undergo phase transitions.

A canonical example is the 2010 Flash Crash. Market intelligence had optimised normal-time efficiency so thoroughly that the system became hyper-fragile. When stress arrived, liquidity vanished discontinuously, prices collapsed within minutes, and recovery required intervention from outside the trading process: an automatic exchange pause, followed by the cancellation of clearly erroneous trades.

This is what “the system breaks” looks like: not gradual inefficiency, but abrupt loss of function.

7. Why Infrastructure Cannot Exercise Restraint

Infrastructure, logistics, and energy systems do not “fight back” when improved. Gains are cumulative, not self-erasing.

Yet intelligence does not flood into them.

The reason is not a lack of gradients. It is that infrastructure structurally cannot exercise restraint.

Infrastructure creates value only when optimisation becomes common. A trading edge is profitable because others do not use it; an infrastructure improvement matters only when everyone does. Scale is not a side effect — it is the point.

This has three structural consequences.

First, infrastructure intelligence cannot remain small or selective. The moment it works, it demands broad rollout.

Second, success forces visibility. Cables, grids, ports, and rights-of-way are physically anchored and jurisdictionally legible. Optimisation immediately collides with planning law, regulation, and the state.

Third, optimisation destroys its own optionality. Gains are standardised, competitors free-ride, rents collapse, and political bargaining replaces technical optimisation.

A contemporary illustration is renewable energy grid investment. Intelligence applied to generation, storage, and load balancing produces real gains — but once deployed, those gains become public infrastructure, not a defensible edge. Returns flatten precisely because the optimisation succeeds.

This is why early infrastructure intelligence — exemplified by Paul Allen’s repeated investments in fibre and backbone capacity — failed to capture durable rents. The failure was not technical. It was structural.

8. Deliberate Under-Optimisation in Fiscal Systems

Tax enforcement often appears to fail because of weak resources, political hesitation, or legal complexity. This appearance is misleading.

In reality, modern fiscal systems stabilise at a point of deliberate under-optimisation — not because enforcement intelligence is unavailable, but because scaling it further becomes self-destabilising.

The United Kingdom provides a clean illustration. The UK has repeatedly committed to tackling offshore tax abuse, yet has consistently failed to enforce transparency measures — such as public beneficial ownership registers — across its own Overseas Territories, despite clear legal authority and repeated deadlines.

Aggressive enforcement intelligence in a globalised system triggers feedback effects: capital relocation, legal arbitrage, retaliatory policy competition, and concentrated political backlash from embedded financial and legal interests. The legal distinction between avoidance and evasion functions as a pressure-release valve, allowing optimisation without collapse.

Beyond a threshold, enforcement ceases to be stabilising and becomes destructive.

As a result, fiscal systems do not maximise compliance. They select a survivable equilibrium: enough enforcement to maintain legitimacy, but not so much that intelligence destabilises capital flows, institutional networks, or political coalitions.

Markets must restrain themselves to survive. Infrastructure cannot restrain itself. Fiscal systems restrain intelligence by design, even while rhetorically demanding more of it.

9. The Boundary Condition

Some systems allow extraction without redesign. Some systems constrain redesign and therefore self-limit extraction.

Persistence depends on restraint. That restraint may be imposed by rules or chosen strategically; where it is structurally unavailable, persistence is too.

Alpha fades not because intelligence weakens, but because systems break when intelligence refuses to stop.

That is not ideology. That is systems theory.

https://thinkinginstructure.substack.com/p/when-intelligence-breaks-the-systems
